How to Verify Complex RISC-V–based Designs?

As RISC-V processor development matures and the core’s usage in SoCs and microcontrollers grows, engineering teams face new verification challenges related not to the RISC-V core itself but rather to the system based on or around it. Understandably, verification is just as complex and time-consuming as it is for, say, an Arm processor-based project.

To date, industry verification efforts have focused on ISA compliance in order to standardize the RISC-V core. Now the question is: how do we handle verification as the system grows?

Clearly, the challenge scales with multiple cores and the addition of off-the-shelf peripherals and custom hardware modules.

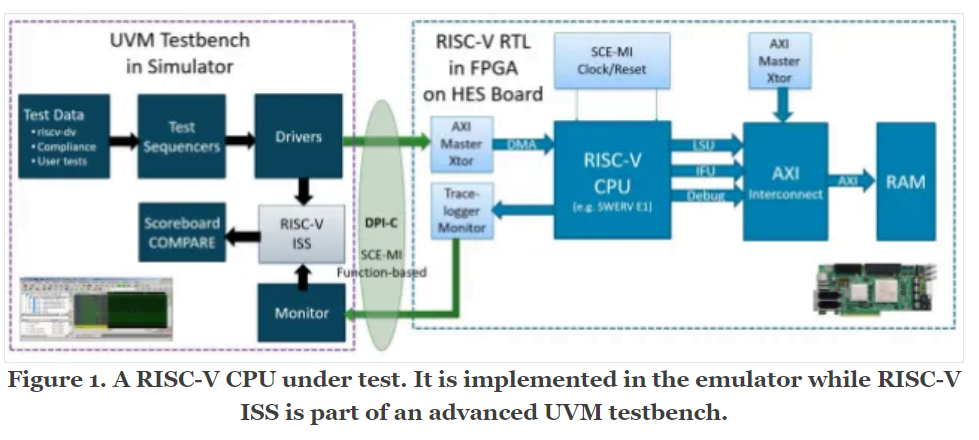

We can see two verification challenges here. First, we need to ensure the core is correct and ISA compliant; second, we need to test the system built around the core. In both cases, transaction-level hardware emulation is a very good fit, particularly if the emulation is based on the Accellera SCE-MI standard, which allows for reuse across different platforms and vendors. Combined with automatic design partitioning and extensive debugging capabilities, this makes a complete verification platform.

As the processor core becomes more powerful and gains functionality, register-transfer-level (RTL) simulation alone is not enough, because it cannot provide complete test coverage in a reasonable time. Emulation runs much faster (in the MHz range), which, combined with cycle accuracy, lets us run longer and more complex tests in a practical amount of time.

When using emulation, the core itself can be automatically compared with a RISC-V instruction set simulator (ISS) golden model to confirm its accuracy and its ISA compliance. Figure 1 shows a RISC-V CPU under test.
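The golden-model comparison described above can be pictured as a lockstep check on retired instructions. The following is a minimal sketch in Python, not a real ISS: `TinyIss`, `RetireRecord`, and `compare_traces` are hypothetical names, the model covers only the RV32I `ADDI` and `ADD` instructions, and the DUT trace is assumed to be a list of (pc, rd, value) records streamed back from the emulator.

```python
from dataclasses import dataclass
from typing import List, Optional

MASK32 = 0xFFFFFFFF


@dataclass(frozen=True)
class RetireRecord:
    pc: int     # address of the retired instruction
    rd: int     # destination register index
    value: int  # 32-bit value held by rd after retirement


def sign_extend(value: int, bits: int) -> int:
    """Interpret the low `bits` bits of value as a two's-complement number."""
    sign = 1 << (bits - 1)
    return (value & (sign - 1)) - (value & sign)


class TinyIss:
    """Hypothetical reference model: RV32I ADDI and ADD only."""

    def __init__(self, program: List[int], base: int = 0) -> None:
        self.program = program
        self.base = base
        self.pc = base
        self.regs = [0] * 32

    def step(self) -> RetireRecord:
        insn = self.program[(self.pc - self.base) // 4]
        opcode = insn & 0x7F
        rd = (insn >> 7) & 0x1F
        funct3 = (insn >> 12) & 0x7
        rs1 = (insn >> 15) & 0x1F
        if opcode == 0x13 and funct3 == 0:  # ADDI rd, rs1, imm
            result = (self.regs[rs1] + sign_extend(insn >> 20, 12)) & MASK32
        elif opcode == 0x33 and funct3 == 0 and (insn >> 25) == 0:  # ADD
            rs2 = (insn >> 20) & 0x1F
            result = (self.regs[rs1] + self.regs[rs2]) & MASK32
        else:
            raise NotImplementedError(f"unsupported instruction 0x{insn:08x}")
        if rd != 0:  # x0 is hard-wired to zero
            self.regs[rd] = result
        record = RetireRecord(self.pc, rd, self.regs[rd])
        self.pc += 4
        return record


def compare_traces(iss: TinyIss, dut_trace: List[RetireRecord]) -> Optional[int]:
    """Step the model once per DUT record; return the first mismatching index."""
    for index, dut_record in enumerate(dut_trace):
        if iss.step() != dut_record:
            return index
    return None


# addi x1, x0, 5 ; addi x2, x0, -3 ; add x3, x1, x2
PROGRAM = [0x00500093, 0xFFD00113, 0x002081B3]
```

Calling `compare_traces(TinyIss(PROGRAM), trace)` returns `None` when every record agrees, or the index of the first divergence, which is the point where debugging would start.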

The testbench used during simulation can be reused for emulation, so it is worth making sure the testbench is “emulation ready” even at the simulation stage. This will enable a smooth switch between simulator and emulator without developing a new testbench.
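One way to read "emulation ready" is that the test talks only to a transaction-level interface, so the backend can be swapped without touching the test. The sketch below illustrates that idea in Python under stated assumptions: `BusTransactor`, `SimulatorBackend`, `EmulatorBackend`, and `scratch_register_test` are hypothetical names, and both backends are dictionary-backed stand-ins rather than real simulator or SCE-MI bindings.

```python
from abc import ABC, abstractmethod
from typing import Dict


class BusTransactor(ABC):
    """Transaction-level interface the test is written against (hypothetical)."""

    @abstractmethod
    def write(self, addr: int, data: int) -> None: ...

    @abstractmethod
    def read(self, addr: int) -> int: ...


class SimulatorBackend(BusTransactor):
    """Stand-in for a driver that would toggle pins in an RTL simulator."""

    def __init__(self) -> None:
        self._regs: Dict[int, int] = {}

    def write(self, addr: int, data: int) -> None:
        self._regs[addr] = data & 0xFFFFFFFF

    def read(self, addr: int) -> int:
        return self._regs.get(addr, 0)


class EmulatorBackend(BusTransactor):
    """Stand-in for a transactor that would exchange messages with an emulator."""

    def __init__(self) -> None:
        self._regs: Dict[int, int] = {}
        self.messages = 0  # counts host-to-emulator messages

    def write(self, addr: int, data: int) -> None:
        self.messages += 1
        self._regs[addr] = data & 0xFFFFFFFF

    def read(self, addr: int) -> int:
        self.messages += 1
        return self._regs.get(addr, 0)


def scratch_register_test(bus: BusTransactor, addr: int = 0x10) -> bool:
    """Backend-agnostic test: write patterns and read them back."""
    for pattern in (0x00000000, 0xFFFFFFFF, 0xA5A5A5A5):
        bus.write(addr, pattern)
        if bus.read(addr) != pattern:
            return False
    return True
```

The same `scratch_register_test` function runs unchanged against either backend, which is the property that makes the switch from simulator to emulator cheap.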

This strategy will also pay off when adding custom instructions to the RISC-V core (instructions intended to accelerate algorithms in the design) because, with hardware emulation, it is possible to test and benchmark these instructions against the developed algorithms faster than in a pure simulation environment.

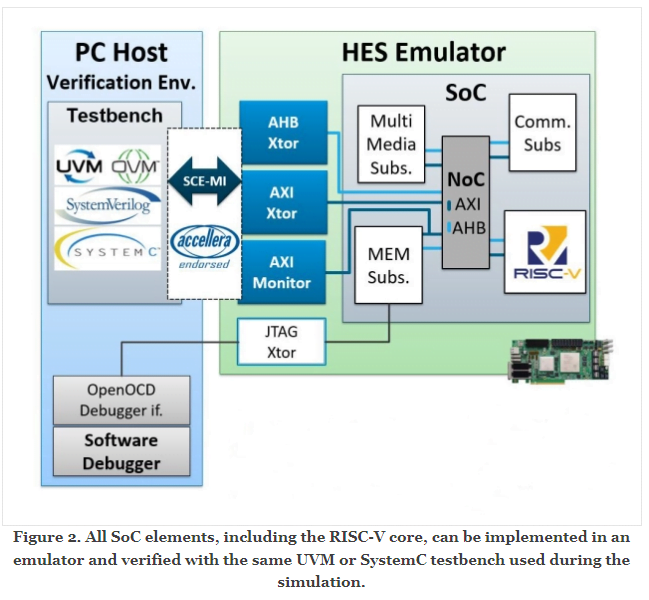

Once the processor or CPU subsystem is checked, we can move on to verifying the whole system. Thankfully, the same technique can be used for the verification of the other hardware elements of the SoC, custom hardware, and peripherals. All can be implemented in the emulator and verified with the same Universal Verification Methodology (UVM) or SystemC testbench used during the simulation. See Figure 2.

Such a methodology allows long test sequences (UVM constrained-random, for example) to build complicated test scenarios, and it accelerates the SoC architecture benchmarking runs used to optimize the hardware structure and components.
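Constrained-random stimulus, mentioned above, means drawing random transactions that always satisfy a set of legality rules. The sketch below shows the idea in Python rather than UVM/SystemVerilog. The `BusTxn` type, the address window, and the two constraints (word alignment and no burst crossing a 4 KiB boundary) are assumptions chosen for illustration, not taken from any particular bus specification.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class BusTxn:
    addr: int        # byte address of the first beat
    burst_len: int   # number of 4-byte beats
    is_write: bool


def random_txn(
    rng: random.Random,
    base: int = 0x1000_0000,  # hypothetical peripheral window
    size: int = 0x1_0000,
    max_burst: int = 16,
) -> BusTxn:
    """Draw one transaction that satisfies both constraints."""
    burst_len = rng.randint(1, max_burst)
    while True:
        # Constraint 1: word-aligned address inside the window.
        addr = base + 4 * rng.randrange(size // 4)
        end = addr + 4 * burst_len - 1
        # Constraint 2: the burst stays in the window and in one 4 KiB page.
        if end < base + size and addr // 4096 == end // 4096:
            return BusTxn(addr, burst_len, rng.random() < 0.5)


def random_sequence(seed: int, count: int) -> list:
    """A seeded sequence, so a failing run can be reproduced exactly."""
    rng = random.Random(seed)
    return [random_txn(rng) for _ in range(count)]
```

Seeding the generator matters in practice: a long emulation run that exposes a bug can be replayed with the same seed, in the emulator or in simulation.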

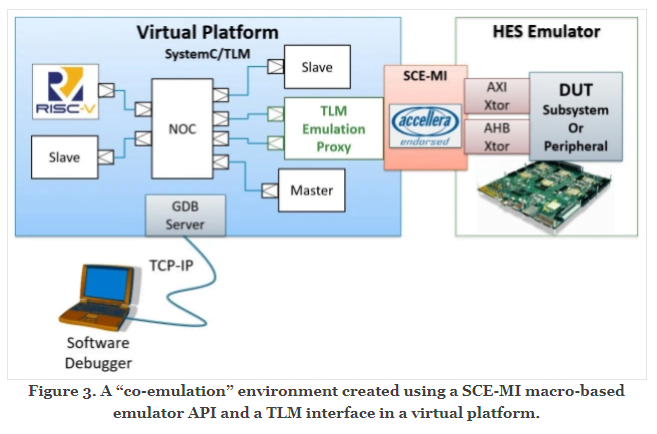

We need to remember, though, that SoC projects these days require not only hardware development but also complicated, multilayer software code. This means software and hardware engineering teams work on the same project, with complex verification requirements and big challenges at the software-hardware interface. Software teams usually start development in isolation, using a software ISS or virtual platforms/machines, which tend to be enough as long as the software does not depend on interacting with the new hardware.

When the system grows with peripherals and custom modules, the software must support not only the RISC-V core and its close surroundings (which can be modeled in software too) but also the rest of the hardware modules, by providing operating system drivers, APIs, or high-level applications.

How do we make sure those two worlds can work together and be synchronized while developing and testing the whole project?

The solution is, again, transaction-level emulation. When using a hardware emulator, we can test all RTL modules at higher speed with flexible debugging functions, but there is even more: an emulator host interface API (usually C/C++ based) allows us to connect the virtual platform used by the software team to create one integrated verification environment for the software and hardware domains of the project. See Figure 3.
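The integration described above can be thought of as address routing: accesses to blocks modeled in software stay inside the virtual platform, while accesses to RTL blocks are forwarded through the emulator's host API. The Python sketch below illustrates only that routing idea. `SystemBus`, `VirtualRam`, and `EmulatorLink` are hypothetical names, the address map is made up, and `EmulatorLink` is a dictionary-backed stand-in for a vendor host API (which, as noted, is usually C/C++ based).

```python
from typing import Dict, List, Protocol, Tuple


class Target(Protocol):
    def write(self, offset: int, data: int) -> None: ...
    def read(self, offset: int) -> int: ...


class VirtualRam:
    """Memory modeled in software inside the virtual platform."""

    def __init__(self) -> None:
        self._words: Dict[int, int] = {}

    def write(self, offset: int, data: int) -> None:
        self._words[offset] = data & 0xFFFFFFFF

    def read(self, offset: int) -> int:
        return self._words.get(offset, 0)


class EmulatorLink:
    """Stand-in for a host API that would forward accesses to RTL in the emulator."""

    def __init__(self) -> None:
        self._regs: Dict[int, int] = {}
        self.transactions = 0  # accesses that crossed to the emulator side

    def write(self, offset: int, data: int) -> None:
        self.transactions += 1
        self._regs[offset] = data & 0xFFFFFFFF

    def read(self, offset: int) -> int:
        self.transactions += 1
        return self._regs.get(offset, 0)


class SystemBus:
    """Routes each access to the software model or the emulator by address."""

    def __init__(self) -> None:
        self._regions: List[Tuple[int, int, Target]] = []

    def map(self, base: int, size: int, target: Target) -> None:
        self._regions.append((base, size, target))

    def _decode(self, addr: int) -> Tuple[Target, int]:
        for base, size, target in self._regions:
            if base <= addr < base + size:
                return target, addr - base
        raise ValueError(f"unmapped address 0x{addr:08x}")

    def write(self, addr: int, data: int) -> None:
        target, offset = self._decode(addr)
        target.write(offset, data)

    def read(self, addr: int) -> int:
        target, offset = self._decode(addr)
        return target.read(offset)
```

From the software team's point of view, a driver issues the same `read` and `write` calls either way; only the address map decides whether a block is a software model or real RTL.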

Now, we can run the whole system at MHz speeds, which shortens the boot-up time of an operating system, for example, from hours to minutes and allows for parallel debugging of the processor and hardware subsystems.
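The hours-to-minutes claim follows from simple arithmetic: run time is the number of clock cycles divided by the effective clock rate. The short Python sketch below works through one example. All three figures (cycle count and both clock rates) are hypothetical values picked for illustration, not measurements from any tool or design.

```python
def run_time_seconds(cycles: int, clock_hz: float) -> float:
    """Wall-clock time needed to execute `cycles` at `clock_hz`."""
    return cycles / clock_hz


# Hypothetical figures, for illustration only.
BOOT_CYCLES = 6_000_000_000   # cycles assumed for an OS boot
SLOW_HZ = 500_000             # assumed effective rate of a slower platform
EMULATOR_HZ = 10_000_000      # assumed effective rate of the emulator

slow_s = run_time_seconds(BOOT_CYCLES, SLOW_HZ)
fast_s = run_time_seconds(BOOT_CYCLES, EMULATOR_HZ)

print(f"slower platform: {slow_s / 3600:.1f} h")
print(f"emulator:        {fast_s / 60:.1f} min")
print(f"speedup:         {slow_s / fast_s:.0f}x")
```

With these assumed numbers the boot takes about 3.3 hours on the slower platform and 10 minutes on the emulator; the speedup is simply the ratio of the two clock rates.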

The benefit of a hybrid co-emulation platform is that the software engineers don’t have to migrate to a completely different environment when the design’s RTL code matures. Their primary development vehicle is still the same virtual platform but, thanks to the co-emulation, it now represents the complete SoC including custom hardware. This way both software and hardware teams can work on the same revision of the project and verify the correctness and performance of the design without waiting on each other.

What about FPGA prototyping, you might ask. Why not just do that? The answer is quite simple: prototyping requires the whole RTL source code to be ready and synthesizable to FPGA for all system elements. That takes a lot of time, and until then the software team can work on virtual models only.

Even when the whole RTL design is ready, providing the prototyping hardware to all software developers might be quite costly. The co-emulation approach therefore not only lets us verify the whole system and discover potential problems much earlier in the project development cycle but also reduces the cost of the verification tools.

Moreover, thanks to the more comprehensive hardware debugging tools in emulation, any flaws or bugs in the RTL code can be easily diagnosed without rolling back to simulation (yet another advantage of early hardware–software co-verification). Once this is done, FPGA prototyping can certainly be extremely useful for high-speed final testing.

Courtesy: https://www.eetimes.com/how-to-verify-complex-risc-v-based-designs/